Uncovering the toil

Design systems get pitched as vision — consistency, scalability, shared language. The day-to-day looks nothing like the pitch.

It looks like reviewing PRs for the same token misuse you flagged last month. The same hex value, in a different component, by a different engineer. Important, repetitive, low on decisions. Toil.

On Atlas, PR review was our most expensive form of toil. We couldn't review every PR, and drift slipped through. So we built an agent.

An hour of config

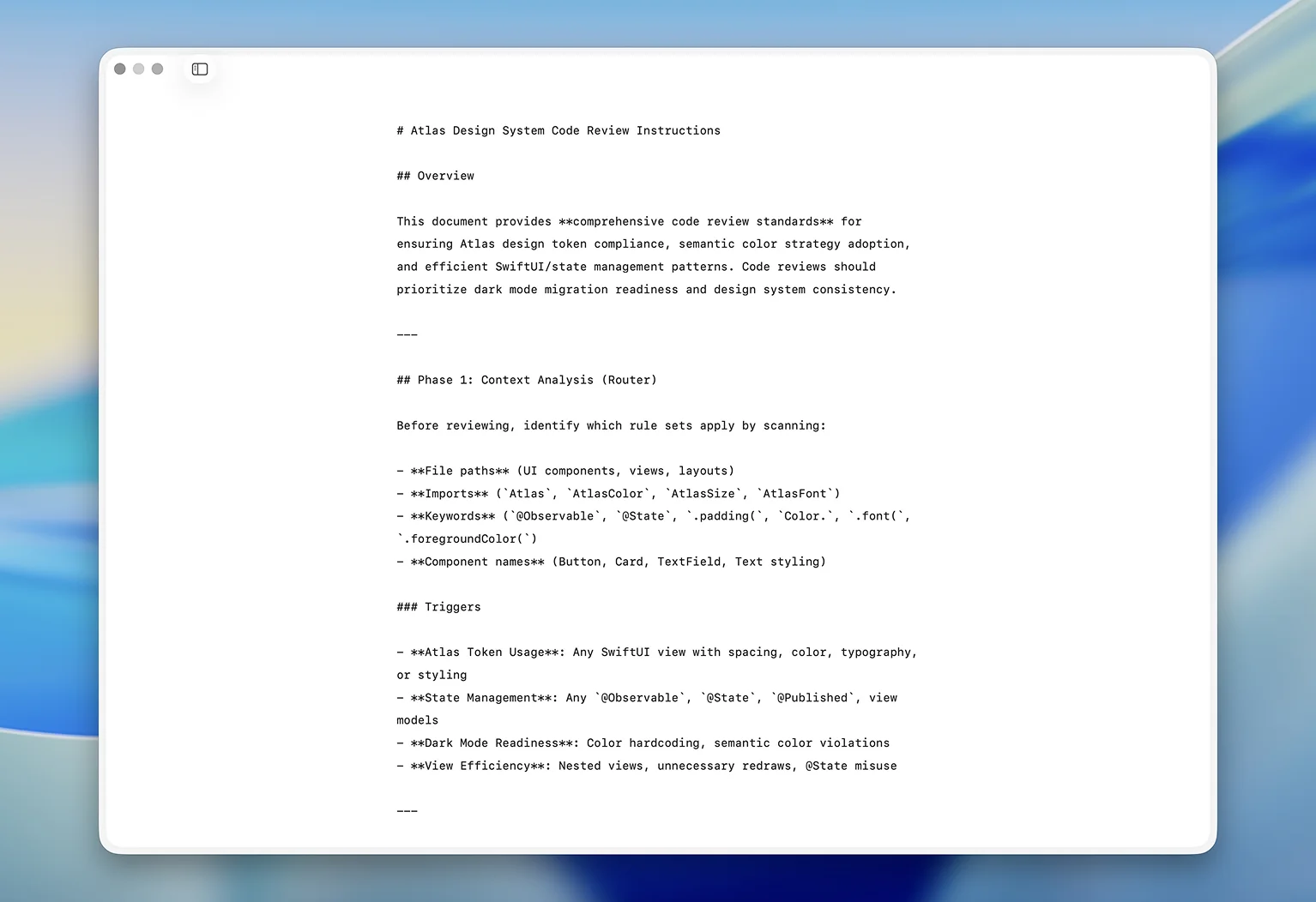

The bot reviews PRs across every team against Atlas best practices. I spent about an hour writing the instructions — what to enforce, what to flag, what tone to use. No fine-tuning, no custom training. An hour of writing down what an Atlas reviewer would say.

In the first week, it left more than fifty comments.

What it found

The first pattern: hex values everywhere. #1A1A1A for text, #E5E5E5 for borders, #0066CC for links — every one with a token already defined in the system. Designers specified the colors. Engineers implemented them. Nobody reached for the token.

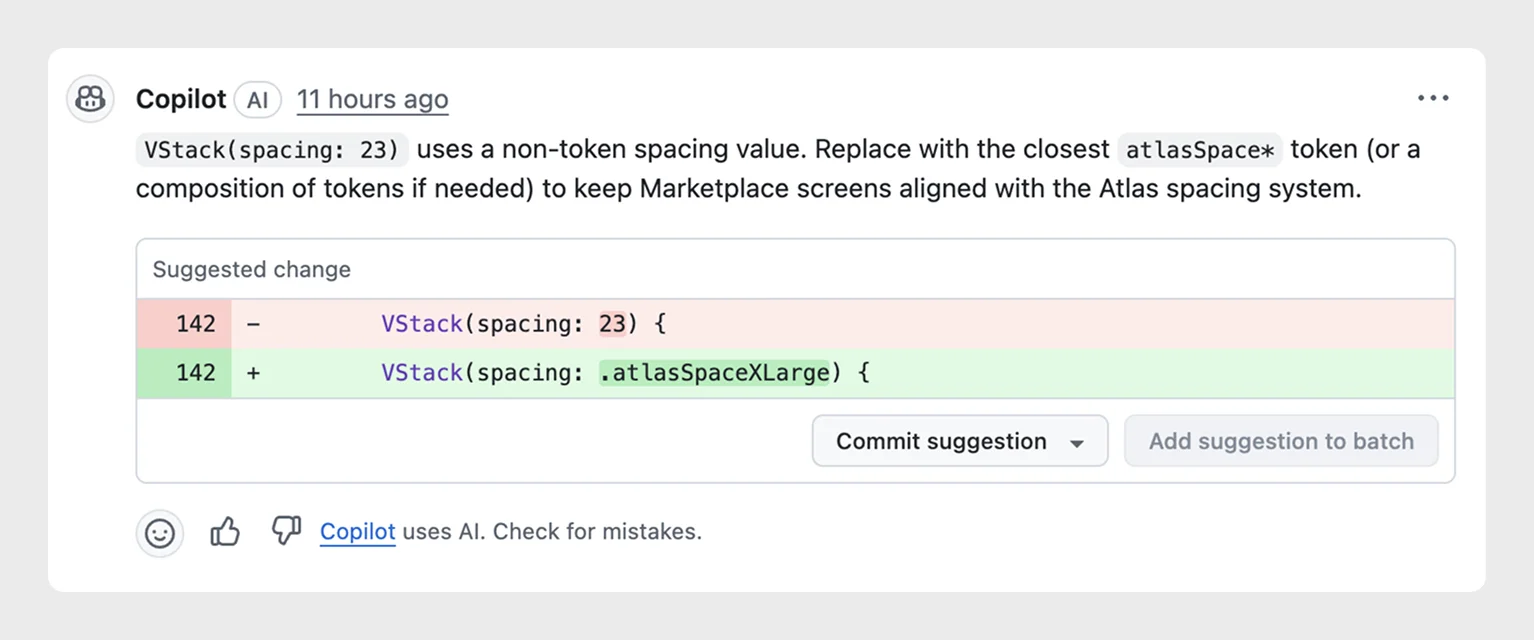
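
The hex pattern is mechanical enough to sketch as a deterministic check. The Python below is a minimal illustration, not how the bot works (the bot follows written instructions): it scans the added lines of a unified diff for hex literals that already have a token. The three hex values are the ones named above; the token names and the `find_hex_drift` function are hypothetical stand-ins, since Atlas's real token names aren't shown in this post.

```python
import re

# Hex values are the ones named in this post; the token names are
# hypothetical placeholders, not Atlas's real tokens.
HEX_TO_TOKEN = {
    "#1A1A1A": "color.text.primary",
    "#E5E5E5": "color.border.default",
    "#0066CC": "color.link.default",
}

# Six-digit hex colors only; shorthand (#FFF) and alpha forms are ignored here.
HEX_PATTERN = re.compile(r"#[0-9A-Fa-f]{6}\b")


def find_hex_drift(diff_text):
    """Return (hex_value, suggested_token) pairs for added lines in a unified diff."""
    findings = []
    for line in diff_text.splitlines():
        # Only inspect added lines; skip the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for match in HEX_PATTERN.findall(line):
            token = HEX_TO_TOKEN.get(match.upper())
            if token is not None:
                findings.append((match, token))
    return findings
```

Hex values with no entry in the map are left alone, so the check only speaks up when a token already exists for that exact color.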

The other pattern: native defaults instead of Atlas modifiers. SwiftUI's stock .padding() instead of our spacing scale. Default CSS margins instead of our tokens. Each one a small drift — fifty of them in a week, on PRs already approved and merging.
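
The native-defaults pattern can be sketched the same way. This is a minimal illustration under stated assumptions: it flags SwiftUI `.padding()` calls with empty parentheses and treats those as the "stock" case. What an Atlas spacing modifier looks like isn't shown in this post, so the sketch reports line numbers only and suggests no replacement; `find_stock_padding` is a hypothetical name.

```python
import re

# Matches .padding() with empty parentheses, i.e. SwiftUI's default padding.
# Calls with arguments, such as .padding(8) or .padding(.horizontal),
# are not matched by this sketch.
STOCK_PADDING = re.compile(r"\.padding\(\s*\)")


def find_stock_padding(swift_source):
    """Return 1-based line numbers where an argument-less .padding() appears."""
    hits = []
    for number, line in enumerate(swift_source.splitlines(), start=1):
        # Ignore whole-line comments so commented-out code isn't flagged.
        if line.lstrip().startswith("//"):
            continue
        if STOCK_PADDING.search(line):
            hits.append(number)
    return hits
```

A regex like this has no idea whether a default is intentional, which is part of why written instructions and a reviewer's judgment fit the job better than a lint rule alone.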

The part that mattered

None of these would have been caught. Not in code review — we weren't on every PR, and the reviewers who were didn't have Atlas memorized. Not in audits — audits surface debt months after it lands. Not in documentation — docs tell you what's right; they don't catch what's wrong.

These fifty comments weren't bugs. They were design debt — invisible until somebody redesigned the component, or a designer caught drift in production and filed a ticket. By then cleanup costs ten times more.

The bot caught them at authoring, when fixing costs next to nothing.

Tuning the bot

We keep tuning the instructions. New patterns we catch slipping through become rules. New Atlas features we want adopted become rules. Every update reaches every team's PRs.

What an hour bought

Before the bot, we were the bottleneck. Engineers shipped code that drifted from the system, and we caught it weeks later in an audit — or not at all. Now feedback happens at authoring, before bad patterns settle.

An hour of instructions. More than fifty debt items surfaced in week one. The pattern keeps going: every week, more catches, more drift prevented at the source.

Design systems exist to reduce friction for product teams. This bot reduces friction for the design systems team. Same principle, different layer. None of this is groundbreaking — plenty of teams are doing the same thing. But an hour of setup that saves us hours every week is worth doing anyway.